Evaluate, control and improve data quality

Corporate processes require high-quality business data. This data should be up to date, consistent and coherent. In reality, however, this is often not the case. Consequently, successful enterprises increasingly view data quality as a process and as a strategically important task worth investing in.

Any objective assessment of business data quality has to be made from the perspective of all customers. Individual customers can be quite satisfied with the data quality while an evaluation of all aspects returns a negative result.

Before one can speak of good or poor data quality at all, criteria must be defined against which the data are measured. Among the most important data quality (DQ) criteria are:

- Correctness

- Completeness

- Currentness

- Referential integrity

- Consistency

- Uniqueness

- Homogeneity

- Compactness

- Reliability

- Coherency

- Relevance

These criteria should become part of a metric for measuring data quality. Such a metric makes the results of different measurement series comparable and prevents a subjective interpretation of data quality from a limited point of view.
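To illustrate how individual criteria could feed into such a metric, here is a minimal Python sketch. It assumes that each criterion is expressed as a score between 0 and 1 and combines the scores as a weighted mean. The records, field names and weights are hypothetical, and only two of the criteria above (completeness and uniqueness) are computed.

```python
from collections import Counter
from typing import Dict, List, Mapping

Record = Dict[str, object]

def completeness(records: List[Record], field: str) -> float:
    """Share of records in which `field` is present and neither None nor empty."""
    if not records:
        return 1.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)

def uniqueness(records: List[Record], key: str) -> float:
    """Share of records whose key value occurs exactly once."""
    if not records:
        return 1.0
    counts = Counter(r.get(key) for r in records)
    return sum(1 for r in records if counts[r.get(key)] == 1) / len(records)

def dq_score(scores: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Weighted mean of the criterion scores; criteria without a weight are ignored."""
    used = [c for c in scores if c in weights]
    total_weight = sum(weights[c] for c in used)
    if total_weight == 0:
        raise ValueError("no weighted criteria to combine")
    return sum(scores[c] * weights[c] for c in used) / total_weight

# Hypothetical sample data: one empty e-mail address, one duplicated id.
records = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 2, "email": "c@example.com"},
    {"id": 3, "email": "d@example.com"},
]
scores = {
    "Completeness": completeness(records, "email"),  # 3 of 4 filled -> 0.75
    "Uniqueness": uniqueness(records, "id"),         # 2 of 4 unique -> 0.5
}
score = dq_score(scores, {"Completeness": 2, "Uniqueness": 1})
print(round(score, 4))  # 0.6667
```

A weighted mean keeps the metric on the same 0 to 1 scale as the individual criteria, so results from different measurement series stay directly comparable.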

Successful process model based on RapidRep

Let us now turn to a concrete approach with which data held in tables or structured text files can be tested for quality effectively and efficiently. The approach has been proven in practice and leads to improvements very quickly.

For further information, please also refer to our brochure "Successfully control data quality" (PDF, 639.0 KiB).

Most business data are held in relational database systems and have to meet technical as well as content requirements. It is therefore an advantage when technically and functionally oriented employees work closely together to improve data quality.

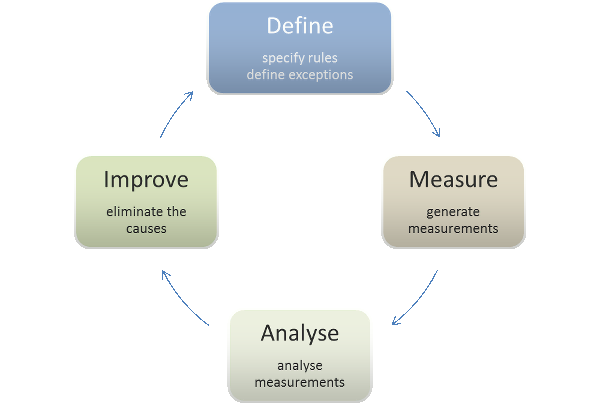

An effective process for improving data quality therefore has to integrate members of both the business and IT departments. In principle, such a process consists of four phases: Define, Measure, Analyse and Improve.

Define

Members of the data quality team determine which data sources are to be checked and which properties the business data must have (so-called invariants). However, it can also be helpful to describe precisely those constellations that characterise incorrect or incomplete data.
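As an illustration of the difference between an invariant and an error constellation, the following Python sketch expresses both as predicates over a record: an invariant must hold, while a constellation must not occur. The booking fields (`amount`, `status`, `close_date`) and the rule IDs are hypothetical examples, not part of RapidRep.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]

# Invariant (hypothetical): every booking must have a non-negative amount.
def inv_amount_non_negative(r: Record) -> bool:
    return r["amount"] >= 0

# Error constellation (hypothetical): a closed booking without a closing date.
def err_closed_without_date(r: Record) -> bool:
    return r["status"] == "closed" and r["close_date"] is None

# Invariants must hold for every record; constellations must never match.
INVARIANTS: Dict[str, Callable[[Record], bool]] = {"INV-01": inv_amount_non_negative}
CONSTELLATIONS: Dict[str, Callable[[Record], bool]] = {"ERR-01": err_closed_without_date}

def find_violations(records: List[Record]) -> List[Tuple[object, str]]:
    """Return (record id, rule id) for each broken invariant or matched constellation."""
    hits = []
    for r in records:
        for rule_id, holds in INVARIANTS.items():
            if not holds(r):
                hits.append((r["id"], rule_id))
        for rule_id, matches in CONSTELLATIONS.items():
            if matches(r):
                hits.append((r["id"], rule_id))
    return hits

records = [
    {"id": 1, "amount": 10, "status": "open", "close_date": None},
    {"id": 2, "amount": -5, "status": "open", "close_date": None},
    {"id": 3, "amount": 7, "status": "closed", "close_date": None},
]
print(find_violations(records))  # [(2, 'INV-01'), (3, 'ERR-01')]
```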

As a universally accepted tool, Excel lends itself to defining the rules and to communication between members of the business and IT departments.

In Excel, the team members define...

- which data (tables, queries or files) are to be tested.

- which tests are applied to the data and which error messages are issued when datasets fail a test.

- threshold values for the number of erroneous datasets above which a notification is triggered.

- rules for the individual tests that RapidRep uses to uniquely identify corrupt datasets.
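The following sketch shows how such a rule sheet, once saved from Excel as a semicolon-separated CSV file, might be read into structured rules. The column names, the use of an SQL predicate to identify corrupt datasets and the `Rule` class are assumptions for illustration; RapidRep's own workbook layout may differ.

```python
import csv
import io
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Rule:
    rule_id: str     # unique ID of the test
    source: str      # table, query or file to be tested
    condition: str   # SQL predicate that is true for corrupt datasets (assumption)
    message: str     # error notification text
    threshold: int   # notify when more than this many datasets fail

def load_rules(csv_text: str) -> List[Rule]:
    """Parse a semicolon-separated rule sheet (hypothetical column layout)."""
    reader = csv.DictReader(io.StringIO(csv_text), delimiter=";")
    return [
        Rule(
            rule_id=row["rule_id"].strip(),
            source=row["source"].strip(),
            condition=row["condition"].strip(),
            message=row["message"].strip(),
            threshold=int(row["threshold"]),
        )
        for row in reader
    ]

def needs_notification(rule: Rule, error_count: int) -> bool:
    """True if the number of failing datasets exceeds the rule's threshold."""
    return error_count > rule.threshold

SHEET = """rule_id;source;condition;message;threshold
R001;customer;email IS NULL;Customer without e-mail address;10
R002;orders;amount < 0;Negative order amount;0
"""
rules = load_rules(SHEET)
```

A semicolon delimiter is used here because SQL conditions frequently contain commas; any delimiter that cannot occur in the cells would work equally well.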

Measure

The defined rules form the logic for measuring data quality. In addition, analyses of how data characteristics are distributed over time can be used as plausibility checks. RapidRep performs the measurements mechanically and fully automatically. For continuous quality measurement, RapidRep can be run periodically (e.g. daily) on a schedule, or started individually with parameters. RapidRep stores all corrupt datasets in a database. The parameters passed when RapidRep is started control the scope of the analyses and label the results of each measurement, so that changes relative to previous analyses can be tracked exactly.
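A measurement run of this kind can be sketched in Python with the standard `sqlite3` module. This is not how RapidRep works internally. The sketch assumes rules of the form (rule ID, table, SQL condition), tested tables that have an `id` column, and a hypothetical result table `dq_result` in which every corrupt dataset is stored together with a run ID, so that later runs can be compared.

```python
import sqlite3
from typing import Dict, Iterable, Tuple

def run_measurement(conn: sqlite3.Connection,
                    rules: Iterable[Tuple[str, str, str]],
                    run_id: str) -> Dict[str, int]:
    """Apply each (rule_id, table, condition) rule and store the ids of failing rows.

    `condition` is an SQL predicate that is true for corrupt rows. Table names and
    conditions are interpolated into the SQL text, so they must come from the
    trusted rule sheet, never from untrusted input.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS dq_result "
        "(run_id TEXT, rule_id TEXT, source TEXT, record_id INTEGER)"
    )
    counts: Dict[str, int] = {}
    for rule_id, table, condition in rules:
        rows = conn.execute(f"SELECT id FROM {table} WHERE {condition}").fetchall()
        # Every corrupt dataset is stored, labelled with the run that found it.
        conn.executemany(
            "INSERT INTO dq_result VALUES (?, ?, ?, ?)",
            [(run_id, rule_id, table, row[0]) for row in rows],
        )
        counts[rule_id] = len(rows)
    conn.commit()
    return counts

# Usage with an in-memory demo database
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany("INSERT INTO customer VALUES (?, ?)",
                 [(1, "a@example.com"), (2, None), (3, None)])
counts = run_measurement(conn, [("R001", "customer", "email IS NULL")], "run-001")
print(counts)  # {'R001': 2}
```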

Analyse

Next, insights have to be derived from the many measurement results, to serve later as the basis for improvement measures. To this end, RapidRep presents the measurement results in a detailed Excel workbook.

- The overview sheet lists the number of erroneous records per test and contrasts it with the number of successfully tested records.

- The delta sheet shows the changes compared with previous analysis runs. Changes that exceed an absolute or relative threshold value are highlighted in colour.

- Each tested data source has its own worksheet listing the corrupt records of that data source.

- Another sheet lists all currently valid data quality rules that RapidRep used for the analysis.
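The logic behind the delta sheet can be sketched as follows: compare the error count per rule with that of a previous run and flag changes that exceed either an absolute or a relative threshold. The function name, the threshold semantics (relative change as a fraction of the previous count) and the example numbers are assumptions for illustration.

```python
from typing import Dict, List, Tuple

def delta_report(previous: Dict[str, int], current: Dict[str, int],
                 abs_threshold: int, rel_threshold: float
                 ) -> List[Tuple[str, int, int, bool]]:
    """Return (rule_id, previous count, current count, flagged) per rule."""
    report = []
    for rule_id in sorted(set(previous) | set(current)):
        prev = previous.get(rule_id, 0)
        curr = current.get(rule_id, 0)
        change = abs(curr - prev)
        if prev:
            rel_change = change / prev
        else:
            # A rule that newly produces errors counts as an unbounded relative change.
            rel_change = float("inf") if change else 0.0
        flagged = change > abs_threshold or rel_change > rel_threshold
        report.append((rule_id, prev, curr, flagged))
    return report

previous = {"R001": 100, "R002": 10}
current = {"R001": 104, "R002": 15, "R003": 1}
report = delta_report(previous, current, abs_threshold=20, rel_threshold=0.25)
print(report)
# [('R001', 100, 104, False), ('R002', 10, 15, True), ('R003', 0, 1, True)]
```

Combining both thresholds with "or" catches large absolute jumps in big tables as well as sharp relative changes in rules that normally produce only a few errors.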

Thanks to the compact yet comprehensive presentation, defects can also be evaluated by employees without specialist technical knowledge.

Improve

Only intensive testing reveals defects. With the approach presented here, the results that a program creates and saves can be checked for plausibility by means of rules.

To improve data that are already erroneous, you can define correction rules. This makes use of the fact that RapidRep knows the unique ID of the rule that a dataset violated. For each rule ID, the data quality team can specify which countermeasures (for example updates or default values) are to be performed.
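A minimal sketch of correction rules keyed by rule ID, assuming that a measurement run yields (record ID, rule ID) pairs. The two countermeasures shown (a default value and an update) and the rule IDs are hypothetical. Failures without a correction rule are returned for manual review instead of being changed.

```python
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]
Correction = Callable[[Record], Record]

# Hypothetical correction rules: rule ID -> countermeasure returning a corrected copy.
CORRECTIONS: Dict[str, Correction] = {
    "R001": lambda r: {**r, "email": "unknown@example.invalid"},  # default value
    "R002": lambda r: {**r, "amount": 0},                         # update
}

def apply_corrections(records: List[Record],
                      failures: List[Tuple[object, str]],
                      corrections: Dict[str, Correction]
                      ) -> Tuple[List[Record], List[Tuple[object, str]]]:
    """Apply the correction for each failed rule; collect failures without one."""
    by_id = {r["id"]: r for r in records}
    unhandled = []
    for record_id, rule_id in failures:
        fix = corrections.get(rule_id)
        if fix is None:
            unhandled.append((record_id, rule_id))
        else:
            by_id[record_id] = fix(by_id[record_id])
    return list(by_id.values()), unhandled

records = [
    {"id": 1, "email": None, "amount": 5},
    {"id": 2, "email": "b@example.com", "amount": -3},
    {"id": 3, "email": "c@example.com", "amount": 1},
]
failures = [(1, "R001"), (2, "R002"), (3, "R999")]
fixed, open_items = apply_corrections(records, failures, CORRECTIONS)
```

Because each correction returns a new record, the original data stay untouched and the corrected copies can be reviewed before they are written back.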

Conclusion

With the help of RapidRep, companies in all sectors can sustainably and economically improve the quality of their business data.

RapidRep ships with a complete sample that lets you test the quality of any of your company's data sources within a short time. All it lacks are your rules.

Please contact us if you are interested in this topic. This solution can certainly be used in your company as well.